Summary

Recent advances in remote sensing and artificial intelligence (AI) make it possible to quantify field-scale phenotypic information accurately and to integrate the resulting big data into predictive and prescriptive management tools. This program uses these advances to improve the resilience of agricultural systems. They present a unique opportunity to develop the prescriptive tools needed to address the next decade’s agricultural and human nutrition challenges.

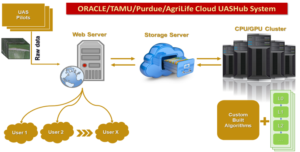

Data Portal for big data processing and communication

Currently, perhaps the most prominent issue slowing the adoption of Unmanned Aerial System (UAS) technology in agricultural settings is the lack of integrated software ecosystems available to the end user. Good-quality data collection and the visualization, analysis, and interpretation of the data are both critical to the successful adoption of UAS technologies for crop management, yet little focus has been placed on these topics so far. To address this challenge and avoid the need to transfer large volumes of data over the network among scientists, we propose to leverage a partnership with ORACLE to bring the already-developed UASHub (Texas A&M AgriLife-Research/Texas A&M University-Corpus Christi/Purdue University) to the cloud environment. The main goal is to integrate raw and post-processed UAS imagery for sharing, visualization, analysis, and interpretation, so that the hub can serve as a platform for data sharing and collaboration among users.

UAS-based high-throughput phenotyping (HTP) system for breeding or research applications

Recent advances in Unmanned Aerial System (UAS) and sensor technology now make it possible to assess overall crop growth and health accurately, at relatively low cost, using fine spatial and high temporal resolution data that were previously unobtainable from traditional remote sensing platforms. Combined with state-of-the-art image processing, visualization, and geospatial data analysis, UAS offers an innovative opportunity to develop high-throughput phenotyping systems and precision agriculture applications, including single-plant phenotyping of some crops. We have developed UAS platforms and standardized data collection and processing protocols to obtain phenotypic data on several traits over the entire growing season for crops such as cotton, wheat, sorghum, corn, sugar/energy cane, tomato, peanut, and potato. The UAS-based HTP system provides high-quality data that plant breeders and research scientists can use for advanced analysis in breeding and research applications. Effective implementation of HTP tools has the potential to increase the size, efficiency, and genetic gain of a breeding program.
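
Structural traits such as canopy height are often derived from photogrammetric elevation products. The Python sketch below is illustrative only and is not the program's actual processing pipeline. It assumes a digital surface model (DSM) and a bare-ground digital terrain model (DTM) sampled on the same grid, in metres. The function names and the cell area are hypothetical.

```python
from typing import List

Grid = List[List[float]]  # row-major grid of elevations or heights, in metres


def canopy_height(dsm: Grid, dtm: Grid) -> Grid:
    """Per-cell canopy height: surface elevation minus bare-ground elevation.

    Negative differences (noise below ground level) are clamped to zero.
    """
    return [
        [max(surface - ground, 0.0) for surface, ground in zip(dsm_row, dtm_row)]
        for dsm_row, dtm_row in zip(dsm, dtm)
    ]


def max_canopy_height(chm: Grid) -> float:
    """Tallest cell in a canopy height model (CHM)."""
    return max(height for row in chm for height in row)


def canopy_volume(chm: Grid, cell_area: float) -> float:
    """Approximate canopy volume: sum of cell heights times the area of one cell."""
    return sum(height for row in chm for height in row) * cell_area


# Hypothetical 2 x 2 plot with 0.5 m x 0.5 m cells (cell_area = 0.25 m^2).
dsm = [[10.5, 10.0], [11.0, 10.05]]
dtm = [[10.0, 10.0], [10.0, 10.0]]
chm = canopy_height(dsm, dtm)
plot_volume = canopy_volume(chm, cell_area=0.25)
```

In practice the DSM and DTM would come from georeferenced rasters, and plot boundaries would be used to aggregate cells per breeding plot. Both steps are outside the scope of this sketch.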

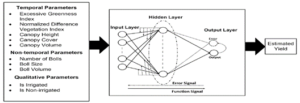

Machine learning based cotton yield estimation using UAS data

With a total areal coverage of approximately 6 million acres, cotton is one of the leading cash crops in Texas, which has led to an upsurge in cotton breeding research in the state. One of the main objectives of cotton breeding research is to select genotypes suitable for specific environments. This research aims to develop a framework that combines UAS-derived canopy attributes (canopy cover, canopy height, canopy volume, NDVI, ExG, and cotton boll traits) with qualitative information to predict cotton yield and thereby facilitate automatic cotton genotype selection. The adopted machine learning framework, based on an artificial neural network (ANN) architecture, produced low residuals, with predicted yields close to the observed yields (R² ≈ 0.9). Neural network models can implicitly detect complex nonlinear relationships between independent and dependent variables in a complex system without requiring explicit mathematical representations. This study showed that UAS-derived phenotypic data can be combined within a machine learning framework to provide reliable estimates of crop yield and useful insight for crop management.
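
Two of the spectral inputs named above, NDVI and ExG, have standard definitions, and the reported R² is the usual coefficient of determination. The Python sketch below illustrates these quantities under stated assumptions: the function names are hypothetical, the ExG threshold of 0.1 used to flag canopy pixels is an arbitrary illustrative value, and none of this reproduces the study's ANN model.

```python
from typing import Sequence, Tuple


def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    total = nir + red
    return (nir - red) / total if total else 0.0


def exg(r: float, g: float, b: float) -> float:
    """Excess Green index, 2g - r - b, on chromatic coordinates (each band / band sum)."""
    total = r + g + b
    return (2 * g - r - b) / total if total else 0.0


def canopy_cover(pixels: Sequence[Tuple[float, float, float]],
                 threshold: float = 0.1) -> float:
    """Fraction of RGB pixels whose ExG exceeds the (assumed) canopy threshold."""
    return sum(exg(*pixel) > threshold for pixel in pixels) / len(pixels)


def r_squared(observed: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_observed = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_observed) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Hypothetical RGB pixels: two green canopy pixels, one grey, one reddish soil.
plot_pixels = [(50, 150, 50), (100, 100, 100), (120, 90, 80), (40, 120, 40)]
cover = canopy_cover(plot_pixels)
```

Per-plot values of such features, collected over multiple flight dates, would form the input vector of a yield regression model. `r_squared` can then compare that model's predictions with harvested yields.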

In-season Management System for Sustainable Cotton Production

Spatial variation within a field in cotton growth, resource availability, and crop need can result in over- and under-application of chemical inputs. Over-application leads to increased chemical runoff and possibly decreased profit for the producer, while under-application may not meet the crop's demand. We integrate UAS- and satellite-based remote sensing, crop modeling, and artificial intelligence (AI) to develop an in-season crop management system that supports real-time decisions on when, how much, and where to apply inputs, with the aim of optimizing inputs and maximizing profit in cotton production. Satellite imagery calibrated with weekly UAS data will generate a large database for developing AI-based models and analytical tools that define in-season management zones for applying nutrients and growth regulators and that predict cotton yield.
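
The text does not say how management zones are delineated. One common generic approach, used here purely as an assumption, is to cluster grid cells by a vigor indicator such as NDVI. The Python sketch below runs a small one-dimensional k-means on hypothetical per-cell NDVI values. The function name, the number of zones, and the data are all illustrative.

```python
from typing import List, Sequence


def kmeans_1d(values: Sequence[float], k: int = 3, iterations: int = 50) -> List[int]:
    """Assign each value to one of k clusters using 1-D k-means.

    Initial centres are spread evenly across the value range, so the
    result is deterministic for a given input.
    """
    low, high = min(values), max(values)
    centres = [low + (high - low) * (i + 0.5) / k for i in range(k)]
    labels = [0] * len(values)
    for _ in range(iterations):
        # Assignment step: nearest centre for every value.
        labels = [min(range(k), key=lambda c: abs(v - centres[c])) for v in values]
        # Update step: move each centre to the mean of its members.
        new_centres = []
        for c in range(k):
            members = [v for v, label in zip(values, labels) if label == c]
            new_centres.append(sum(members) / len(members) if members else centres[c])
        if new_centres == centres:
            break  # converged
        centres = new_centres
    return labels


# Hypothetical NDVI values for six grid cells in one field.
cell_ndvi = [0.20, 0.25, 0.50, 0.55, 0.80, 0.85]
zones = kmeans_1d(cell_ndvi, k=3)
```

In this toy example the cells fall into three zones of low, medium, and high NDVI. A real system would combine several calibrated layers, enforce spatial contiguity, and link each zone to an agronomic recommendation, none of which is attempted here.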

Publications

- A. Chang, J. Anciso, C. Avila, J. Enciso, J. Landivar, M. Maeda, J. Jung, J. Yeom. 2021. Unmanned Aircraft System (UAS) Based High Throughput Phenotyping (HTP) for Tomato Yield Estimation. Journal of Sensors, 2021 (in press).

- A. Chang, J. Yeom, J. Jung, J. Landivar, “Comparison of canopy shape and vegetation indices of citrus trees derived from UAV multispectral images for characterization of citrus greening disease”, Remote Sensing, 12(24), 2020.

- J. Jung, M. Maeda, A. Chang, M. Bhandari, A. Ashapure, J. Landivar, “The potential of remote sensing and artificial intelligence as tools to improve the resilience of agriculture production systems”, Current Opinion in Biotechnology, vol. 70, pp. 15-22, 2020.

- A. Ashapure, J. Jung, A. Chang, S. Oh, J. Yeom, M. Maeda, A. Maeda, N. Dube, J. Landivar, S. Hague, W. Smith, “Developing a machine learning based cotton yield estimation framework using multi-temporal UAS data”, ISPRS Journal of Photogrammetry and Remote Sensing, vol. 169, pp. 180-194, 2020.

- S. Oh, A. Chang, A. Ashapure, J. Jung, N. Dube, M. Maeda, D. Gonzalez, J. Landivar, “Plant Counting of Cotton from UAS Imagery Using Deep Learning-Based Object Detection Framework”, Remote Sensing, 12(18):2981, DOI: 10.3390/rs12182981, 2020.

- M. Bhandari, A. Ibrahim, Q. Xue, J. Jung, A. Chang, J. Rudd, M. Maeda, N. Rajan, H. Neely, J. Landivar, “Assessing winter wheat foliage disease severity using aerial imagery acquired from small Unmanned Aerial Vehicle (UAV)”, Computers and Electronics in Agriculture, 176:105665, DOI: 10.1016/j.compag.2020.105665, 2020.

- A. Chang, J. Jung, M. Maeda, J. Landivar, H. Carvalho, J. Yeom, “Measurement of Cotton Canopy Temperature Using Radiometric Thermal Sensor Mounted on the Unmanned Aerial Vehicle (UAV)”, Journal of Sensors, 2020:1-7, DOI: 10.1155/2020/8899325, 2020.

- A. Ashapure, J. Jung, A. Chang, S. Oh, M. Maeda, J. Landivar, “A comparative study of RGB and multispectral sensor based cotton canopy cover modelling using multi-temporal UAS Data,” Remote Sensing, 11(23):2757, DOI: 10.3390/rs11232757, 2019.

- J. Yeom, J. Jung, A. Chang, A. Ashapure, M. Maeda, A. Maeda, J. Landivar, “Comparison of Vegetation Indices Derived from UAV Data for Tillage Treatment in Agriculture,” Remote Sensing, 11(13):1548, DOI: 10.3390/rs11131548, 2019.

- A. Ashapure, J. Jung, J. Yeom, A. Chang, M. Maeda, A. Maeda, J. Landivar, “A novel framework to detect conventional tillage and No-tillage cropping system effect on cotton growth and development using multi-temporal UAS data,” ISPRS Journal of Photogrammetry and Remote Sensing, 152, pp. 49-64, 2019.

- J. Enciso, C.A. Avila, J. Jung, S. Elsayed-Farag, A. Chang, J. Yeom, J. Landivar, M. Maeda, J.C. Chavez, “Validation of agronomic UAV and field measurements for tomato varieties,” Computers and Electronics in Agriculture, 158, pp. 278-283, 2019.

- Yang, Y., L.T. Wilson, J. Jifon, A. Landivar, J. da Silva, M.M. Maeda, J. Wang, E. Christensen, 2018. Energy cane growth dynamics and potential early harvest penalties along the Texas Gulf Coast. Biomass and Bioenergy 113, 1-14.

- J. Yeom, J. Jung, A. Chang, M. Maeda, J. Landivar, “Automated Open Cotton Boll Detection for Yield Estimation Using Unmanned Aircraft Vehicle (UAV) Data,” Remote Sensing, doi:10.3390/rs10121895, 2018.

- J. Jung, M. Maeda, A. Chang, J. Landivar, J. Yeom, J. McGinty, “Unmanned Aerial System assisted framework for the selection of high yielding cotton genotypes,” Computers and Electronics in Agriculture, 152, pp. 74-82, 2018.

- J. Enciso, M. Maeda, J. Landivar, J. Jung, A. Chang, “A ground based platform for high throughput phenotyping”, Computers and Electronics in Agriculture, 141, pp. 286-291, 2017.

- Chen, R., T. Chu, J.A. Landivar, C. Yang and M.M. Maeda. 2017. Monitoring cotton (Gossypium hirsutum L.) germination using ultrahigh-resolution UAS images. Precis Agric: 1-17.

- A. Chang, J. Jung, M. Maeda, J. Landivar, “Crop height monitoring with digital imagery from Unmanned Aerial System (UAS),” Computers and Electronics in Agriculture, 141, pp. 232-237, 2017.

- J. Goolsby, J. Jung, J. Landivar, W. McCutcheon, R. Lacewell, R. Duhaime, D. Baca, R. Puhger, H. Hasel, K. Varner, B. Miller, A. Schwartz, A. Perez de Leon, “Evaluation of Unmanned Aerial Vehicles (UAVs) for detection of cattle in the Cattle Fever Tick Permanent Quarantine Zone,” Subtropical Agriculture and Environments, 67, pp. 24-27, 2016.

- Tianxing Chu, Ruizhi Chen, Juan A. Landivar, Murilo Maeda, Chenghai Yang, Michael Starek. 2016. Cotton growth modeling and assessment using unmanned aircraft system visual-band imagery. Journal of Applied Remote Sensing. 10(3). http://dx.doi.org/10.1117/1.JRS.10.036018